Elevating Code Quality: Lessons from Our Review Process in ProvidenceAPI-Back

Even in fast-paced development, a robust system depends on the quality of its code. For the ProvidenceAPI-Back project, we recently took a critical look at how we were ensuring that quality, particularly through our code review process. What we found, and the changes we made in response, taught us a good deal about sustainable development.

The Situation

Initially, our approach to code reviews within ProvidenceAPI-Back was somewhat informal. Developers submitted pull requests and reviews did take place, but they varied in depth and consistency. Some reviews were rushed because of tight deadlines, and feedback was often subjective rather than based on established guidelines. The result was an inconsistent codebase: some features were meticulously crafted, while others introduced minor technical debt or subtle bugs that only surfaced later.

The Challenge

This inconsistency began to manifest as an increasing number of issues reported post-deployment and a slower pace of development due to frequent refactoring. New team members found it challenging to understand the implicit quality expectations, leading to a steeper learning curve. The review process itself became a bottleneck, not because reviews were too strict, but because the lack of clear standards meant more back-and-forth, delaying merges and deployments.

The Realization

We realized that our code review process needed to evolve from a mere gatekeeping step to a foundational practice for knowledge sharing and quality assurance. It wasn't about finding every tiny flaw, but about fostering a collective ownership of the codebase and ensuring every contribution met a baseline standard of quality, maintainability, and correctness.

Our Approach to Better Reviews

To address these challenges, we implemented several key changes:

-

Established Clear Review Guidelines: We collaboratively defined a set of criteria for what constitutes a high-quality pull request. This included considerations for clarity, error handling, performance implications, adherence to architectural patterns, and test coverage.

-

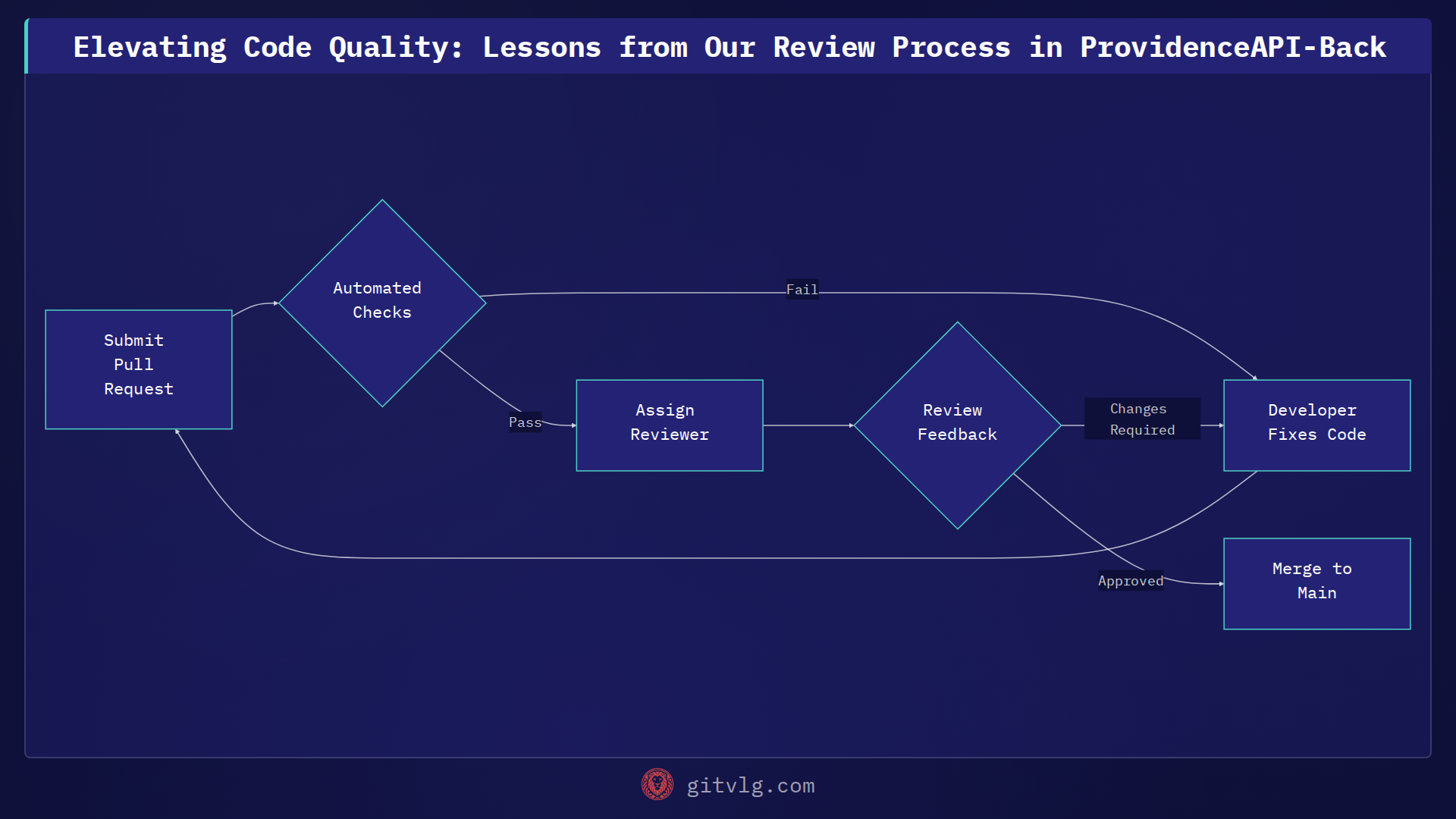

Automated Pre-Review Checks: Before a human reviewer even looked at the code, we integrated automated tools for linting, formatting, and basic static analysis. This offloaded repetitive checks, allowing human reviewers to focus on logic, architecture, and design patterns.

-

Promoted Shared Responsibility: We encouraged wider participation in reviews, ensuring that knowledge wasn't siloed. Cross-functional reviews also helped catch issues that might be overlooked by developers focused solely on the immediate feature.

-

Focused, Actionable Feedback: Reviewers were encouraged to provide constructive and specific feedback, explaining the 'why' behind suggestions rather than just the 'what'. This transformed reviews into learning opportunities.
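To make the automated pre-review step more concrete, here is a minimal sketch of a script that runs a set of checks and reports which ones failed. It is not the tooling actually used in ProvidenceAPI-Back: the names `run_checks`, `summarize`, and `main` are hypothetical, and the commands passed in are placeholders that a team would swap for its own linter, formatter, and static analysis commands.

```python
import subprocess
import sys
from dataclasses import dataclass


@dataclass
class CheckResult:
    name: str      # label shown in the report, e.g. "lint"
    passed: bool   # True when the command exited with status 0
    output: str    # combined stdout/stderr, useful for the PR author


def run_checks(checks: dict[str, list[str]]) -> list[CheckResult]:
    """Run each command in order and record whether it succeeded."""
    results = []
    for name, command in checks.items():
        try:
            proc = subprocess.run(command, capture_output=True, text=True)
            passed = proc.returncode == 0
            output = (proc.stdout + proc.stderr).strip()
        except FileNotFoundError:
            # The tool is not installed; treat that as a failed check.
            passed = False
            output = f"command not found: {command[0]}"
        results.append(CheckResult(name, passed, output))
    return results


def summarize(results: list[CheckResult]) -> str:
    """Build a one-line-per-check report."""
    return "\n".join(
        f"[{'PASS' if r.passed else 'FAIL'}] {r.name}" for r in results
    )


def main(checks: dict[str, list[str]]) -> int:
    """Print the report and return an exit code (0 only if all checks pass)."""
    results = run_checks(checks)
    print(summarize(results))
    return 0 if all(r.passed for r in results) else 1


if __name__ == "__main__":
    # Placeholder commands (assumption): replace these with the project's
    # real lint, format, and static analysis invocations.
    example_checks = {
        "lint": [sys.executable, "-c", "print('lint ok')"],
        "format": [sys.executable, "-c", "print('format ok')"],
    }
    main(example_checks)
```

In a CI job or pre-push hook, the return value of `main` would typically be passed to `sys.exit` so that a failing check blocks the pull request before a human reviewer is asked to look at it.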

The Outcome

The impact on the ProvidenceAPI-Back project has been significant. We've seen a noticeable improvement in overall code quality, a reduction in post-release defects, and faster integration of new features. Onboarding new developers has become smoother as clear guidelines provide a roadmap for contributing effectively. Furthermore, the collaborative nature of enhanced reviews has strengthened team cohesion and shared understanding of the system.

Key Takeaways

Investing time in refining your code review process is not just about catching bugs; it's about building a more resilient, maintainable, and collaborative development environment. For ProvidenceAPI-Back, it transformed code reviews from a potential bottleneck into a powerful engine for continuous improvement and shared excellence.
