Optimizing API Performance Through Caching Strategies

Introduction

In high-traffic APIs, response times can quickly degrade when the same data is fetched repeatedly from a slow backing source such as a database. Caching is a fundamental technique for mitigating this: it reduces both latency and server load. This post explores strategies for caching API responses effectively.

What is API Caching?

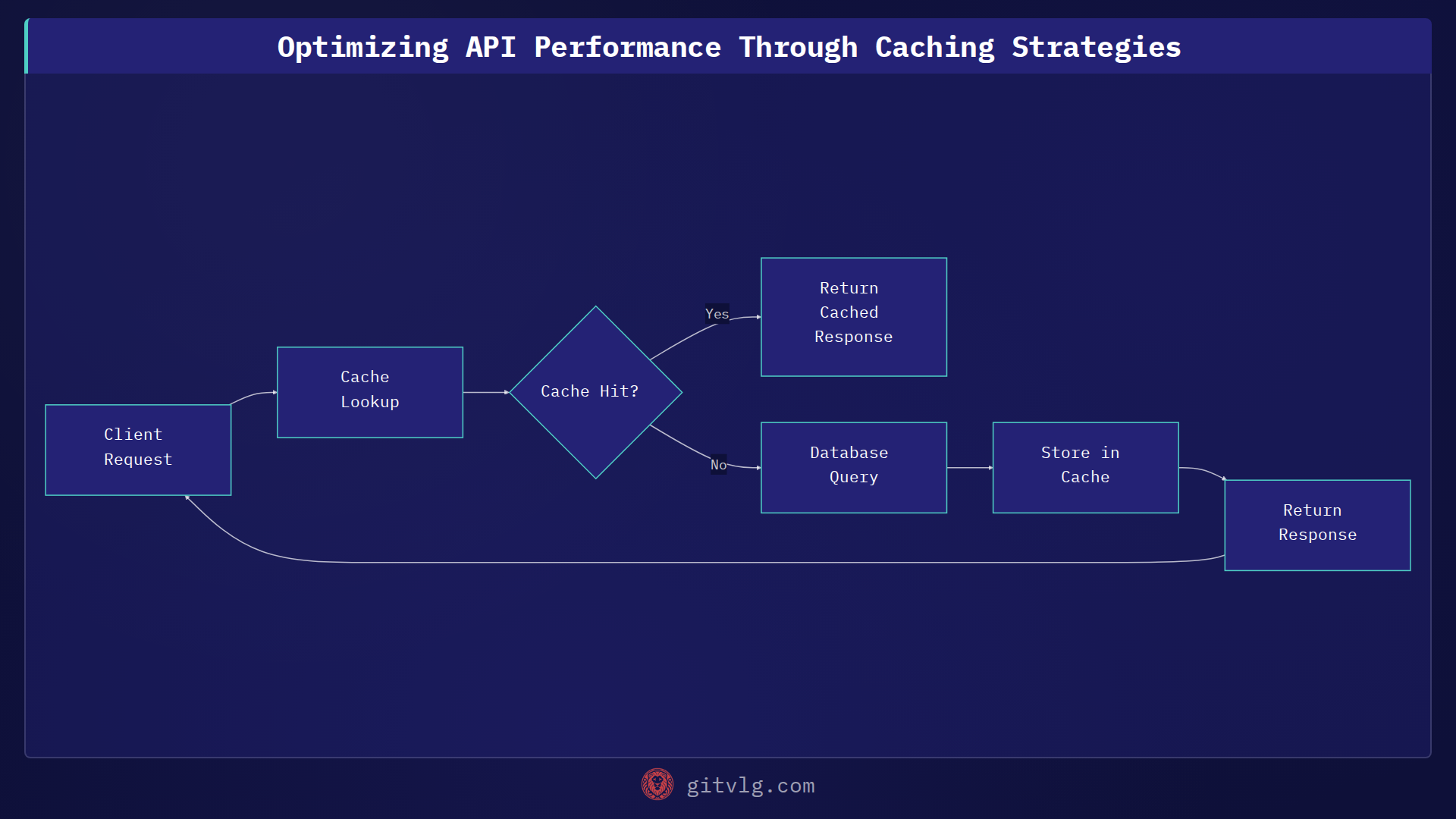

API caching involves storing the responses of frequently requested endpoints. Subsequent requests can then be served directly from the cache, without re-executing the original query or computation. For read-heavy endpoints, this can substantially reduce server load and response times.

Common Caching Locations

Caching can be implemented at various layers within the architecture:

- Client-Side Caching: Browsers and mobile apps can cache API responses according to HTTP caching headers such as Cache-Control and ETag.

- CDN (Content Delivery Network): CDNs cache content geographically closer to users, reducing network latency.

- Reverse Proxy Cache: Reverse proxies such as Nginx or Varnish sit in front of the application server and can answer repeated requests from their cache without forwarding them to the application.

- In-Memory Cache: Application-level stores such as Redis or Memcached keep data in RAM, giving very fast lookups for whatever the application itself chooses to cache.
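To make the HTTP-header layer more concrete, here is a minimal Node.js sketch of how a server might emit Cache-Control and ETag headers and answer a revalidation with 304 Not Modified. The function names computeETag and conditionalResponse, the 60-second max-age, and the exact-match comparison of If-None-Match are assumptions for illustration; real If-None-Match headers can carry several tags or weak validators, which this sketch ignores.

```javascript
const crypto = require("crypto");

// Build a strong ETag from the response body: identical bodies get identical tags.
function computeETag(body) {
  return '"' + crypto.createHash("sha256").update(body).digest("hex") + '"';
}

// Decide how to answer a GET, given the client's request headers.
// maxAgeSeconds is an assumed policy value, not a recommendation.
function conditionalResponse(requestHeaders, body, maxAgeSeconds = 60) {
  const etag = computeETag(body);
  const headers = {
    "Cache-Control": `public, max-age=${maxAgeSeconds}`,
    ETag: etag,
  };

  // The client already holds this exact version: confirm it without resending the body.
  if (requestHeaders["if-none-match"] === etag) {
    return { status: 304, headers, body: "" };
  }
  return { status: 200, headers, body };
}
```

In a Node `http` handler you would pass `req.headers` (Node lowercases incoming header names) and then call `res.writeHead(status, headers)` and `res.end(body)`. For per-user data such as profiles, `private` instead of `public` keeps shared caches like CDNs from storing the response.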

Cache Invalidation Strategies

Choosing the right cache invalidation strategy is crucial to ensure data consistency:

- Time-Based Expiration (TTL): The simplest approach; each cache entry expires after a fixed duration. Suitable for data that changes infrequently or can tolerate being stale for up to the TTL.

- Event-Based Invalidation: Cache entries are invalidated when the underlying data changes. Requires a mechanism to detect data updates (e.g., webhooks, database triggers).

- Manual Invalidation: Explicitly invalidate cache entries through an API or administrative interface.
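The strategies above can be combined in one small structure. The sketch below is a single-process, in-memory cache that expires entries after a TTL and also supports explicit invalidation; the class name TtlCache and its methods are hypothetical, and in a multi-instance deployment you would apply the same ideas to a shared store such as Redis instead.

```javascript
// Minimal in-process cache combining TTL expiration with explicit invalidation.
// TtlCache is a hypothetical helper written for illustration only.
class TtlCache {
  constructor(ttlMs, now = () => Date.now()) {
    this.ttlMs = ttlMs;
    this.now = now; // injectable clock so expiry can be tested deterministically
    this.entries = new Map(); // key -> { value, expiresAt }
  }

  set(key, value) {
    this.entries.set(key, { value, expiresAt: this.now() + this.ttlMs });
  }

  get(key) {
    const entry = this.entries.get(key);
    if (entry === undefined) return undefined;
    // Time-based expiration: stale entries count as misses and are dropped lazily.
    if (this.now() >= entry.expiresAt) {
      this.entries.delete(key);
      return undefined;
    }
    return entry.value;
  }

  // Event-based or manual invalidation: remove an entry as soon as its source changes.
  invalidate(key) {
    return this.entries.delete(key);
  }
}
```

Calling `invalidate(key)` right after a database write is the event-based variant; exposing the same call through an admin endpoint is the manual variant. The TTL still acts as a safety net if an invalidation is ever missed.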

A Practical Example

Consider an API endpoint that retrieves user profile information. Without caching, each request hits the database:

// Without caching: every request queries the database directly
function getUserProfile(userId) {
  return database.query('SELECT * FROM user_profiles WHERE id = ?', userId);
}

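With caching, we first check an in-memory cache (e.g., Redis) and query the database only on a miss, storing the result for later requests. This is commonly called the cache-aside pattern: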

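// With caching: check the cache first, fall back to the database on a miss.
// Assumes `redis` is a client such as ioredis that accepts set(key, value, 'EX', seconds)
// and returns null from get() on a miss; `database` is the same client as above.
async function getUserProfile(userId) {
  const cacheKey = `user:${userId}:profile`;

  const cached = await redis.get(cacheKey);
  if (cached !== null) {
    return JSON.parse(cached); // cache hit: the stored value is a JSON string
  }

  // Cache miss: read from the database, then cache the result for 1 hour
  const profile = await database.query('SELECT * FROM user_profiles WHERE id = ?', userId);
  await redis.set(cacheKey, JSON.stringify(profile), 'EX', 3600);
  return profile; // already an object, so no JSON.parse here
}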
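To see the cache-aside flow end to end, together with event-based invalidation on writes, here is a runnable sketch that swaps Redis and the database for in-memory stand-ins. The objects fakeRedis and fakeDb, and the functions profileKey and updateUserProfile, are hypothetical; fakeRedis mimics only the get/set/del calls used here and ignores the TTL arguments.

```javascript
// Hypothetical in-memory stand-in for the few Redis calls used below.
// It accepts but ignores the 'EX', seconds arguments to set().
const fakeRedis = {
  store: new Map(),
  async get(key) { return this.store.has(key) ? this.store.get(key) : null; },
  async set(key, value) { this.store.set(key, value); return "OK"; },
  async del(key) { return this.store.delete(key) ? 1 : 0; },
};

// Hypothetical stand-in for the database, counting how often it is actually read.
const fakeDb = {
  rows: new Map([[1, { id: 1, name: "Ada" }]]),
  reads: 0,
  async findProfile(id) { this.reads += 1; return this.rows.get(id) ?? null; },
  async updateProfile(id, fields) { this.rows.set(id, { ...this.rows.get(id), ...fields }); },
};

const profileKey = (userId) => `user:${userId}:profile`;

// Cache-aside read: same shape as getUserProfile above.
async function getUserProfile(userId) {
  const cached = await fakeRedis.get(profileKey(userId));
  if (cached !== null) return JSON.parse(cached); // hit
  const profile = await fakeDb.findProfile(userId); // miss
  await fakeRedis.set(profileKey(userId), JSON.stringify(profile), "EX", 3600);
  return profile;
}

// Event-based invalidation: delete the cached entry after the write succeeds,
// so the next read repopulates it from the database.
async function updateUserProfile(userId, fields) {
  await fakeDb.updateProfile(userId, fields);
  await fakeRedis.del(profileKey(userId));
}
```

Deleting the entry rather than overwriting it keeps the write path simple: the next read fetches the fresh row. A narrow race remains (a concurrent read can re-cache the old row between its database read and the delete), and the TTL bounds how long such a stale entry can survive.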

Monitoring Cache Performance

It's essential to monitor the cache hit rate (the fraction of lookups served from the cache) and the eviction rate in order to tune cache size and TTLs. Prometheus can collect these metrics, and Grafana can visualize them.
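As a minimal sketch, the hit rate is hits / (hits + misses). The code below tracks it in application code and also derives it from Redis's own server-wide counters; CacheStats and redisCacheStats are hypothetical names, while keyspace_hits, keyspace_misses, and evicted_keys are fields reported by the Redis `INFO stats` command. How you fetch that text depends on your client.

```javascript
// Per-cache hit/miss/eviction counters. CacheStats is a hypothetical helper;
// in production these numbers would usually be exported to a metrics system.
class CacheStats {
  constructor() {
    this.hits = 0;
    this.misses = 0;
    this.evictions = 0;
  }

  recordHit() { this.hits += 1; }
  recordMiss() { this.misses += 1; }
  recordEviction() { this.evictions += 1; }

  // Hit rate = hits / (hits + misses); reported as 0 before any lookups.
  hitRate() {
    const lookups = this.hits + this.misses;
    return lookups === 0 ? 0 : this.hits / lookups;
  }
}

// Derive the same figures from the text of Redis's `INFO stats` output.
function redisCacheStats(infoText) {
  const fields = {};
  for (const line of infoText.split(/\r?\n/)) {
    const sep = line.indexOf(":");
    // Only "name:value" lines are parsed; headers like "# Stats" and blanks are skipped.
    if (sep > 0 && !line.startsWith("#")) {
      fields[line.slice(0, sep)] = Number(line.slice(sep + 1));
    }
  }
  const hits = fields.keyspace_hits ?? 0;
  const misses = fields.keyspace_misses ?? 0;
  const lookups = hits + misses;
  return {
    hits,
    misses,
    evictions: fields.evicted_keys ?? 0,
    hitRate: lookups === 0 ? 0 : hits / lookups,
  };
}
```

Per-endpoint counters are often more informative than Redis's server-wide totals, because one poorly cached endpoint can hide behind a healthy overall hit rate.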

Conclusion

Caching is a powerful technique for optimizing API performance. By strategically caching API responses, you can reduce latency, decrease server load, and improve the overall user experience. Experiment with different caching locations and invalidation strategies to find the best fit for your application. Start by implementing time-based expiration for read-heavy endpoints and monitor the impact on performance.

Generated with Gitvlg.com